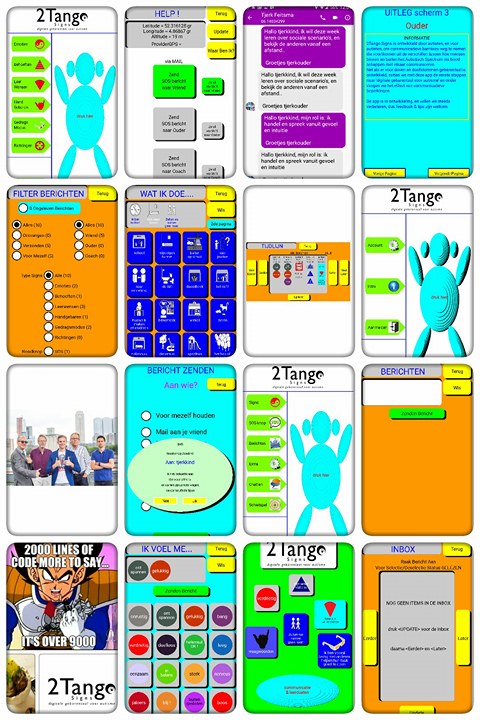

03 2017 - Early-stage prototype of the self-management app

The first prototypes of the ‘tapping’ functionality of the Signs language are built by developer Kees. Written in Java, the prototype focuses on self-reporting and autism-friendly sentence formation. It works and is fairly effective, but its tracking is not ‘dynamic’: it does not learn quickly enough what the individual user needs before the need for communication arises in a situation. To be used by millions, this has to improve. The team grows in size to tackle this problem.

Later in the research effort, we investigate different types of individuals with autism spectrum disorder (ASD), developmental language disorder (DLD), and neurological variances that lead to limited speech. We widen our focus from our small group of Signs users to a broader audience and completely overhaul our system to include other neurodivergent groups that could really use the technology we are building. The main focus remains autism-friendly communication, but over time we come to understand that we can help more people whose communication difficulties resemble those that autistic people face daily.